Scaling AI-Generated Apps: Performance, Testing, CI/CD

AI can assemble feature-rich code in minutes, but real value appears when that code survives traffic spikes, audits, and on-call nights. Whether your stack came from a fullstack builder AI or a human team augmented by code generation, treat scale as a product feature. Start with clear SLOs, budgets, and a plan. And never blindly trust an authentication module generator: verify, extend, and test what it produces.

Performance-first architecture

Design for latency before feature flourishes. Minimize chatty calls, batch prompts, and cache aggressively at the edge. Stream responses to cut time-to-first-token, and introduce queues for bursty inference.
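As a rough illustration of the caching advice, here is a minimal in-memory sketch that keys cached responses by a hash of the model name and prompt. The class name `PromptCache`, the TTL default, and the injectable clock are assumptions made for this example; a production setup would more likely sit in an edge cache or a shared store, and caching only makes sense for prompts whose answers may be reused.

```python
import hashlib
import time
from typing import Callable, Dict, Tuple


class PromptCache:
    """In-memory TTL cache keyed by a hash of (model, prompt). Illustrative only."""

    def __init__(self, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so tests can control time
        self._store: Dict[str, Tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Hashing keeps keys short and avoids storing raw prompts as keys.
        return hashlib.sha256(f"{model}\x00{prompt}".encode("utf-8")).hexdigest()

    def get_or_compute(self, model: str, prompt: str,
                       compute: Callable[[str], str]) -> str:
        key = self._key(model, prompt)
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            self.hits += 1
            return entry[1]
        # Miss or expired entry: call the (slow) model and remember the result.
        self.misses += 1
        value = compute(prompt)
        self._store[key] = (now, value)
        return value
```

Tracking `hits` and `misses` on the cache itself makes it easy to export a hit-rate metric and check whether the cache is actually earning its keep.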

- Define budgets: p95 ≤ 300 ms for the core API, p95 ≤ 1.5 s for LLM endpoints, error rate ≤ 0.5%.

- Profile weekly: generate flamegraphs, pin hot paths, and cache prompt templates plus embeddings.

- Protect databases: use connection pools, read replicas, and backpressure; enable circuit breakers around model providers.

- Frontend: ship hydration islands, defer non-critical scripts, and record RUM to validate gains.
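The circuit breaker mentioned for model providers can be sketched in a few lines. This is a simplified version under stated assumptions: the names `CircuitBreaker` and `CircuitOpenError`, the failure threshold, and the cool-down period are all hypothetical, and the breaker is not thread-safe.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when calls are short-circuited instead of reaching the provider."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3,
                 reset_after_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._threshold = failure_threshold
        self._reset_after = reset_after_seconds
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self._reset_after:
                # Still cooling down: fail fast without touching the provider.
                raise CircuitOpenError("provider circuit is open")
            # Cool-down elapsed: fall through and allow one trial call.
        try:
            result = fn()
        except Exception:
            self._failures += 1
            # A failed trial call re-opens immediately; otherwise open
            # once consecutive failures reach the threshold.
            if self._opened_at is not None or self._failures >= self._threshold:
                self._opened_at = self._clock()
            raise
        # Any success closes the circuit and resets the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Wrapping each provider call as `breaker.call(lambda: client_call(...))` lets the caller catch `CircuitOpenError` and fall back to a cached answer or a degraded response instead of queueing behind a failing dependency.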

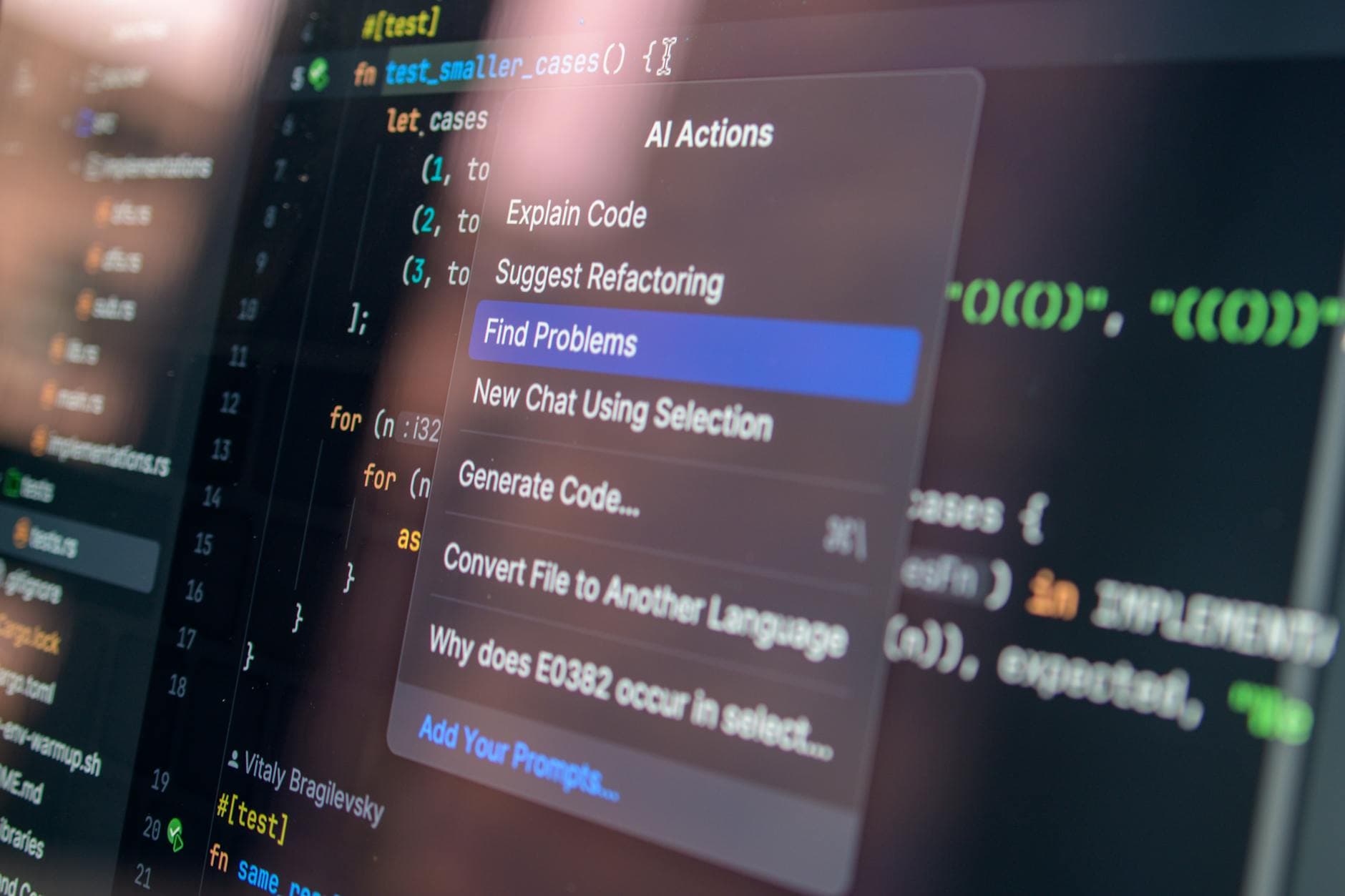

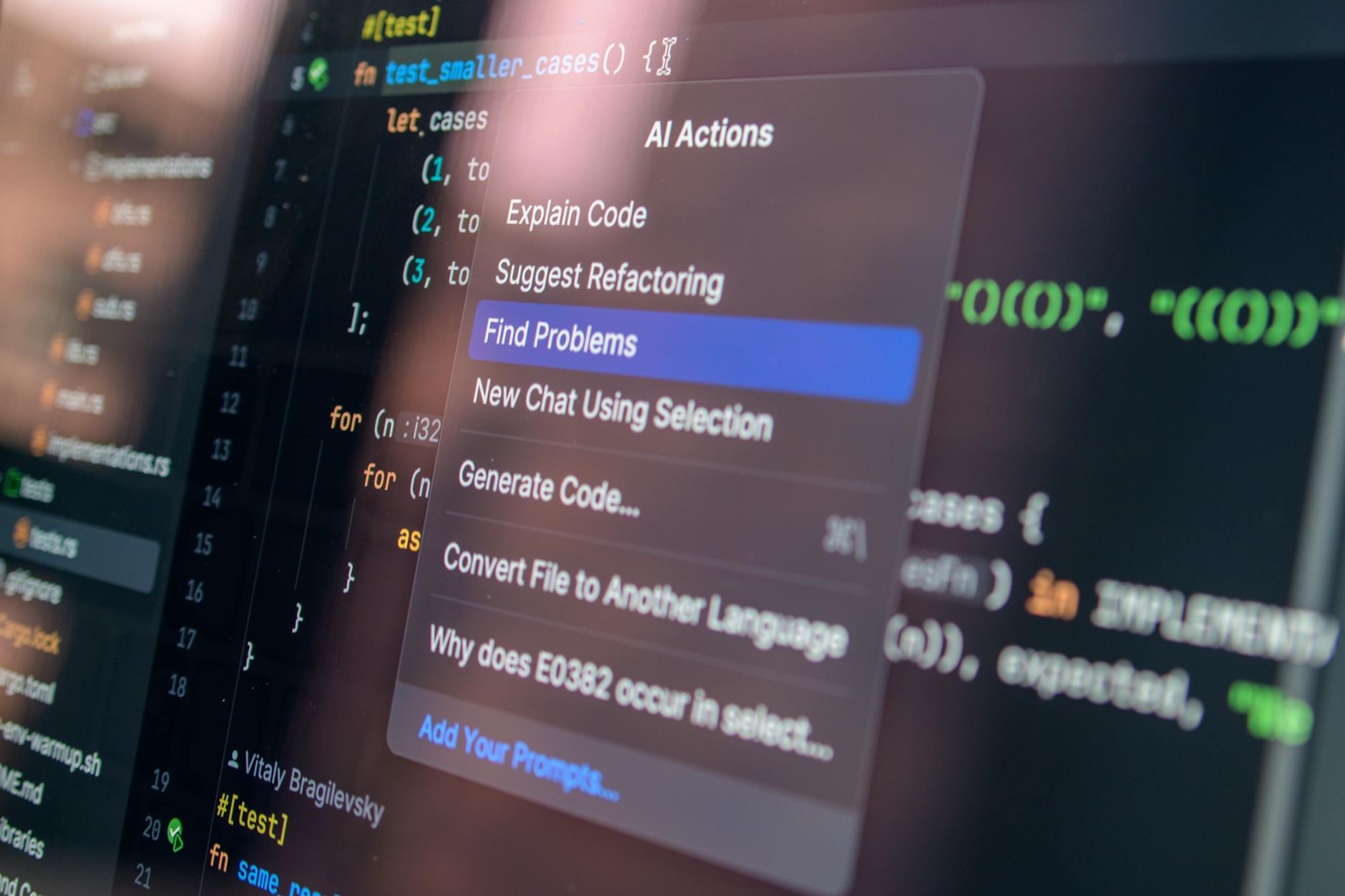

Deterministic testing for non-deterministic AI

Stabilize variability with golden datasets, seed control, and offline snapshots of model outputs. When models change, compare deltas, not vibes.
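One way to make "compare deltas" concrete is to score a baseline and a candidate model against the same golden cases and gate on the difference. The sketch below is an assumption-heavy illustration: `GoldenCase`, the word-overlap metric, and the function names are invented here, and the "models" are plain callables, which in an offline run would read from recorded output snapshots rather than call a live provider.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class GoldenCase:
    prompt: str
    expected: str


def token_overlap(expected: str, actual: str) -> float:
    """Jaccard overlap of lowercase word sets, in [0, 1]. A stand-in metric."""
    a, b = set(expected.lower().split()), set(actual.lower().split())
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def mean_score(cases: Sequence[GoldenCase], model: Callable[[str], str]) -> float:
    if not cases:
        raise ValueError("golden set is empty")
    return sum(token_overlap(c.expected, model(c.prompt)) for c in cases) / len(cases)


def regression_delta(cases: Sequence[GoldenCase],
                     baseline: Callable[[str], str],
                     candidate: Callable[[str], str]) -> float:
    """Positive means the candidate scores higher than the baseline."""
    return mean_score(cases, candidate) - mean_score(cases, baseline)
```

A CI job could then fail when `regression_delta(...)` drops below a chosen tolerance, for example `-0.02`, so a model or prompt change is judged by a number rather than by spot checks.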

- Contract tests: enforce JSON schemas for prompt I/O; reject malformed responses fast.

- Property tests: check idempotency and pagination using randomized fixtures; enforce idempotency keys on write handlers.

- Synthetic users: simulate timeouts, token exhaustion, and malformed inputs; add chaos on provider failures.

- Infra tests: run services in Testcontainers; verify migrations on disposable databases.

- Privacy checks: unit-test redaction, PII detectors, and audit log scrubbing.
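A contract test for model output can be as simple as parsing the response and rejecting anything that does not match the agreed shape. The sketch below uses a hand-rolled type map rather than a real JSON Schema validator, purely to stay dependency-free; `SUMMARY_CONTRACT`, `ContractError`, and the field names are hypothetical.

```python
import json
from typing import Any, Dict

# Hypothetical contract: field name -> required Python type after JSON parsing.
SUMMARY_CONTRACT: Dict[str, type] = {
    "title": str,
    "bullets": list,
    "confidence": float,
}


class ContractError(ValueError):
    """Raised when a model response does not match the expected shape."""


def parse_model_output(raw: str, contract: Dict[str, type]) -> Dict[str, Any]:
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ContractError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ContractError("top-level value must be an object")
    missing = sorted(set(contract) - set(data))
    if missing:
        raise ContractError(f"missing fields: {missing}")
    extra = sorted(set(data) - set(contract))
    if extra:
        raise ContractError(f"unexpected fields: {extra}")
    for field, expected in contract.items():
        if not isinstance(data[field], expected):
            raise ContractError(
                f"field {field!r} must be {expected.__name__}, "
                f"got {type(data[field]).__name__}"
            )
    return data
```

Running this check at the boundary, before any business logic sees the response, is what makes the rejection fast: a truncated or free-text reply fails with a clear error instead of surfacing later as a `KeyError`.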

Security hardening for AI-built apps

Treat generators as scaffolding, not assurance. Map controls to OWASP ASVS and supply-chain baselines. If an authentication module generator produces login flows, require passkeys or OIDC, issue JWTs with short TTLs and rotate their signing keys, and pin scopes to the minimum each client needs.

- Shift left: SAST, dependency, and secret scanning on every PR; DAST on ephemeral envs.

- Runtime: enforce mTLS, WAF rules for prompt injection payloads, rate-limit by user and model, and add tamper-evident audit trails.

- Data: classify prompts/outputs; encrypt at rest; apply row-level security to traces.
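A tamper-evident audit trail is often built as a hash chain, where each entry commits to the one before it. The following is a minimal sketch of that idea, not a complete design: the entry layout and function names are assumptions, and the chain only proves tampering if the latest hash is also stored somewhere an attacker cannot rewrite.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: Dict[str, Any]) -> str:
    # Canonical JSON so the same event always hashes to the same value.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log: List[Dict[str, Any]], event: Dict[str, Any]) -> None:
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": _digest(prev_hash, event)})


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    """Return False if any entry was edited, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because every hash depends on all earlier entries, editing or deleting one record invalidates everything after it, which is what makes the trail evident rather than merely append-only by convention.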

CI/CD blueprint that won't flinch

Codify rigor so releases are boring and rollbacks fast.

- Lint, type-check, and build once; sign artifacts.

- Run unit, contract, property, and golden-set evaluations with pass/fail budgets.

- Spin up ephemeral preview environments; execute smoke, DAST, and load tests (steady + spike + soak).

- Policy gates: IaC scans and OPA checks; require migration dry-runs.

- Progressive delivery: canary 1%→10%→50% with automatic rollback on SLO breach.
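The progressive-delivery step can be expressed as a small control loop: shift traffic, read metrics, and roll back on the first SLO breach. The sketch below assumes two hypothetical callbacks, `set_traffic` and `read_metrics`, standing in for whatever the deployment platform and metrics backend provide; the default thresholds reuse the 300 ms p95 and 0.5% error-rate budgets from earlier, and the final promotion to 100% is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Slo:
    p95_ms: float = 300.0
    error_rate: float = 0.005  # 0.5%


@dataclass(frozen=True)
class Metrics:
    p95_ms: float
    error_rate: float


def run_canary(stages: Sequence[int],
               set_traffic: Callable[[int], None],
               read_metrics: Callable[[int], Metrics],
               slo: Slo = Slo()) -> bool:
    """Walk the canary stages; return True if promoted, False if rolled back."""
    for percent in stages:
        set_traffic(percent)
        observed = read_metrics(percent)
        if observed.p95_ms > slo.p95_ms or observed.error_rate > slo.error_rate:
            set_traffic(0)  # automatic rollback: send no traffic to the canary
            return False
    set_traffic(100)  # every stage stayed within the SLO: promote fully
    return True
```

Calling `run_canary((1, 10, 50), set_traffic, read_metrics)` mirrors the 1%→10%→50% progression; in practice `read_metrics` would wait for a soak window before sampling so that each stage is judged on enough traffic.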

Observability and cost control

Trace every request, including model spans with tokens, temperature, cache hits, and latency. Build dashboards for p95s and retries. Alert on budget drift.
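To make the span fields and the budget alert concrete, here is a minimal sketch of a model span record with a p95 helper and a drift calculation. Everything named here (`ModelSpan`, `usd_per_1k_tokens`, treating cache hits as free) is an assumption for illustration; real pricing usually differs between prompt and completion tokens, and a tracing library would normally carry these attributes.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class ModelSpan:
    model: str
    prompt_tokens: int
    completion_tokens: int
    temperature: float
    cache_hit: bool
    latency_ms: float


def p95(values: Sequence[float]) -> float:
    """Nearest-rank 95th percentile."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
    return ordered[rank - 1]


def cost_usd(spans: Sequence[ModelSpan], usd_per_1k_tokens: float) -> float:
    # Assumption: cache hits never reach the provider, so they cost nothing.
    billable = sum(s.prompt_tokens + s.completion_tokens
                   for s in spans if not s.cache_hit)
    return billable / 1000 * usd_per_1k_tokens


def budget_drift(spent_usd: float, budget_usd: float) -> float:
    """Fraction over (positive) or under (negative) budget."""
    return (spent_usd - budget_usd) / budget_usd
```

An alert rule could then fire when `budget_drift(...)` exceeds a chosen threshold such as `0.1`, or when `p95` over recent span latencies crosses the endpoint's latency budget.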

Bottom line

Shipping faster is easy; scaling safely is culture. Pair automation with human review, prioritize SLOs, and your AI-built app will stay fast, secure, and predictable under load.