Scaling AI-Generated Apps: Performance, Testing, and CI/CD

When an AI agent scaffolds your app, shipping isn't the hard part; scaling is. Here's a pragmatic playbook that treats latency, correctness, and release safety as first-class features.

Backend choice: Supabase vs custom backend with AI

For teams moving fast, Supabase wins week one: Postgres, Row Level Security, Edge Functions, and auth in hours. With AI-heavy workloads, it shines when you keep embeddings, vector indexes, and queue workers on a single platform. Go custom when you need cross-region write control, bespoke rate limiting per API key, or GPU-aware job scheduling. A hybrid works: keep users and data in Supabase, and offload LLM orchestration and feature stores to a service layer (NestJS/Go) that uses Redis Streams for backpressure.
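
To make the custom-backend case concrete, per-key rate limiting is commonly built as a token bucket. The sketch below is a minimal in-memory version that assumes a single process. `KeyRateLimiter`, its capacity, and its refill rate are hypothetical names and numbers chosen for illustration, not something Supabase or NestJS provides.

```typescript
// Hypothetical per-API-key token bucket. Names and limits are illustrative.
type Bucket = { tokens: number; lastRefillMs: number };

class KeyRateLimiter {
  private readonly buckets = new Map<string, Bucket>();
  private readonly capacity: number;        // maximum burst per key
  private readonly refillPerSecond: number; // sustained rate per key
  private readonly now: () => number;       // injectable clock, useful in tests

  constructor(
    capacity: number,
    refillPerSecond: number,
    now: () => number = () => Date.now(),
  ) {
    this.capacity = capacity;
    this.refillPerSecond = refillPerSecond;
    this.now = now;
  }

  /** Returns true if the request may proceed, false if the key is over its limit. */
  allow(apiKey: string): boolean {
    const t = this.now();
    const bucket: Bucket =
      this.buckets.get(apiKey) ?? { tokens: this.capacity, lastRefillMs: t };

    // Refill in proportion to elapsed time, capped at the bucket capacity.
    const elapsedSec = (t - bucket.lastRefillMs) / 1000;
    bucket.tokens = Math.min(
      this.capacity,
      bucket.tokens + elapsedSec * this.refillPerSecond,
    );
    bucket.lastRefillMs = t;

    const allowed = bucket.tokens >= 1;
    if (allowed) bucket.tokens -= 1;
    this.buckets.set(apiKey, bucket);
    return allowed;
  }
}
```

A service running several instances would need to keep the buckets in shared storage (Redis, for example) rather than in a local `Map`; that part is left out here.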

Adalo alternative for production scale

Low-code speeds validation, but as DAU climbs past 10k, you want typed contracts, zero-downtime migrations, and observability. An effective Adalo alternative is a thin React/Flutter client over a typed API (tRPC or OpenAPI), managed Postgres (Supabase), and a dedicated AI worker tier. Keep the builder's speed with internal generators: "screen:create" commands, plus prompt templates versioned alongside code.

Community platform builder AI patterns

Community apps spike unpredictably. Use a community platform builder AI to draft onboarding flows and moderation prompts, then lock them behind feature flags. Precompute trending topics via hourly jobs, and use a vector store for duplicate-post detection to protect feed quality.
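
Duplicate-post detection usually comes down to comparing a new post's embedding against recent ones. Here is a minimal in-memory sketch, assuming embeddings are already computed elsewhere. The `Post` shape, the `findDuplicate` helper, and the 0.92 threshold are illustrative assumptions, and in production the vector store would run the nearest-neighbor search instead of a loop.

```typescript
// Cosine similarity between two equal-length embedding vectors.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("Embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  // A zero vector has no direction, so treat it as dissimilar to everything.
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Hypothetical post shape: only the fields this sketch needs.
type Post = { id: string; embedding: number[] };

/**
 * Returns the most similar recent post at or above the threshold,
 * or undefined when nothing is close enough to count as a duplicate.
 */
function findDuplicate(
  candidate: number[],
  recent: Post[],
  threshold: number = 0.92, // assumed cutoff; tune against labeled duplicates
): Post | undefined {
  let best: Post | undefined;
  let bestScore = threshold;
  for (const post of recent) {
    const score = cosineSimilarity(candidate, post.embedding);
    if (score >= bestScore) {
      best = post;
      bestScore = score;
    }
  }
  return best;
}
```

The threshold is the knob that matters: too low and legitimate follow-up posts get flagged, too high and near-copies slip through.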

Performance guardrails

- Set p95 latency budgets per surface (feed 300 ms, search 600 ms, generation 2 s); when a request would blow its budget, fail open with cached summaries.

- Warm LLM contexts: maintain small prompt caches keyed by intent; refresh every 15 minutes.

- Batch token-heavy work: group similar prompts in the queue to maximize context reuse.

- Use RLS and generated columns in Supabase to push simple filters to SQL instead of the app layer.
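
The prompt-cache guardrail above can be sketched as a small TTL cache keyed by intent. This is one possible shape, not a prescribed implementation: `PromptCache` and `getOrBuild` are hypothetical names, and the 15-minute default simply mirrors the refresh interval mentioned in the list.

```typescript
// Hypothetical TTL cache for warmed prompt contexts, keyed by intent.
class PromptCache {
  private readonly entries = new Map<string, { value: string; expiresAt: number }>();
  private readonly ttlMs: number;
  private readonly now: () => number; // injectable clock, useful in tests

  constructor(
    ttlMs: number = 15 * 60 * 1000, // 15 minutes
    now: () => number = () => Date.now(),
  ) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  /** Returns the cached context for an intent, rebuilding it when missing or stale. */
  getOrBuild(intent: string, build: () => string): string {
    const hit = this.entries.get(intent);
    if (hit !== undefined && hit.expiresAt > this.now()) {
      return hit.value;
    }
    const value = build();
    this.entries.set(intent, { value, expiresAt: this.now() + this.ttlMs });
    return value;
  }
}
```

Keeping the cache small and keyed by intent (rather than by full prompt text) is what makes the hit rate worth tracking; a cache keyed on raw user input would rarely hit.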

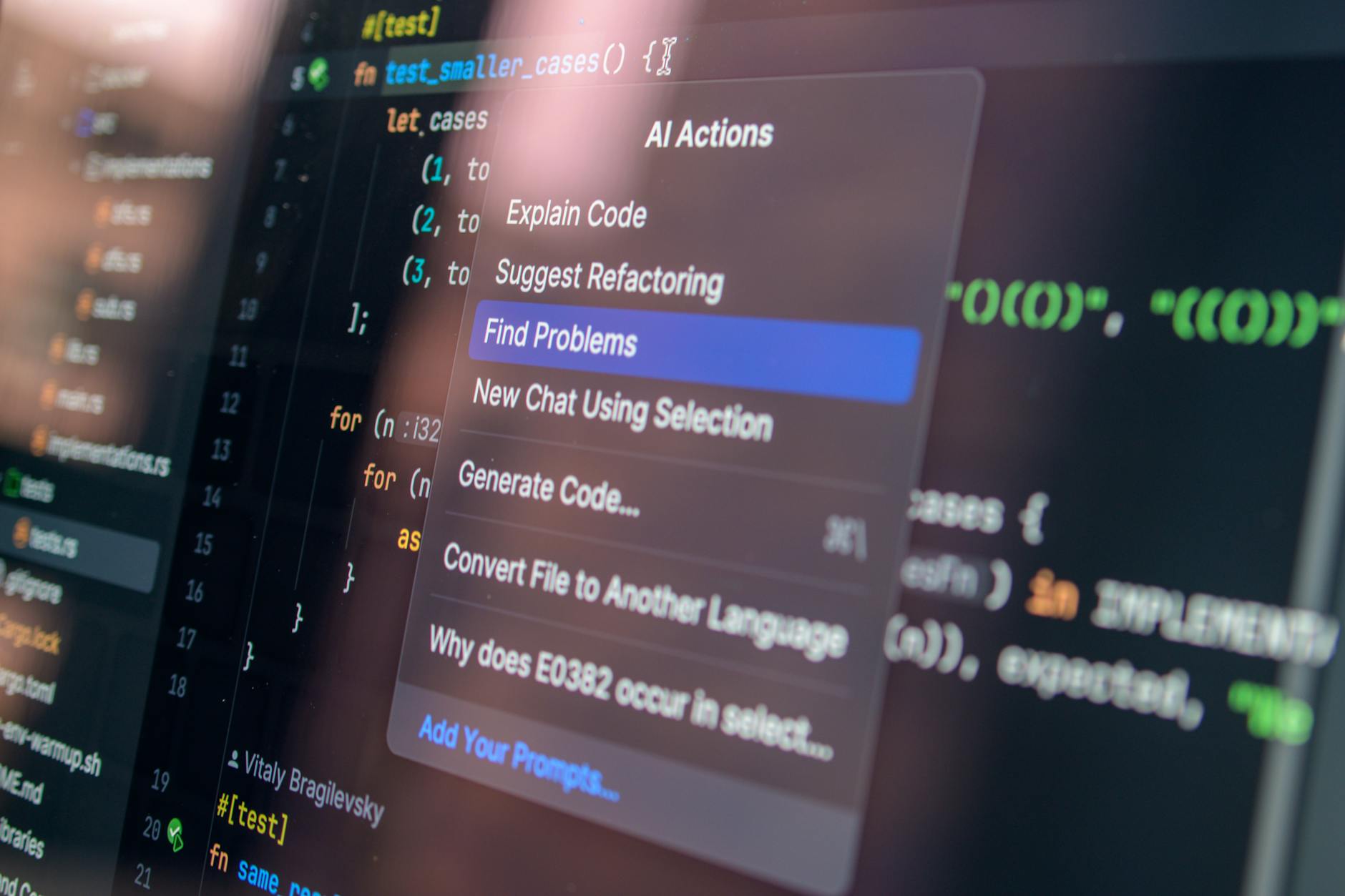

Testing that catches AI regressions

- Golden tests: freeze inputs/expected outputs for top prompts; diff with semantic similarity thresholds.

- Property-based tests for tools: "function calls must be idempotent and bounded to 2 retries."

- Synthetic personas: replay 1k user journeys nightly with randomized content lengths and languages.
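
A golden test with a similarity threshold might look like the sketch below. The Jaccard word-overlap metric is only a dependency-free stand-in; a real suite would likely plug in embedding-based semantic similarity through the same `Similarity` signature. `GoldenCase`, `runGoldens`, and the 0.8 threshold are assumptions made for illustration.

```typescript
type GoldenCase = { prompt: string; expected: string };
type Similarity = (a: string, b: string) => number;

// Stand-in metric: Jaccard overlap of lowercase word sets (0 = disjoint, 1 = same words).
const jaccard: Similarity = (a, b) => {
  const tokens = (s: string): Set<string> =>
    new Set(s.toLowerCase().split(/\W+/).filter((t) => t.length > 0));
  const setA = tokens(a);
  const setB = tokens(b);
  if (setA.size === 0 && setB.size === 0) return 1;
  let shared = 0;
  setA.forEach((t) => {
    if (setB.has(t)) shared += 1;
  });
  return shared / (setA.size + setB.size - shared);
};

/**
 * Runs every frozen case through `generate` and returns the cases whose
 * output scores below the threshold. An empty result means the suite passes.
 */
function runGoldens(
  cases: GoldenCase[],
  generate: (prompt: string) => string,
  similarity: Similarity = jaccard,
  threshold: number = 0.8, // assumed baseline; calibrate per prompt family
): { prompt: string; score: number }[] {
  return cases
    .map((c) => ({ prompt: c.prompt, score: similarity(generate(c.prompt), c.expected) }))
    .filter((r) => r.score < threshold);
}
```

Returning the failing cases with their scores, rather than a bare pass/fail, makes the diff reviewable when a model or prompt change shifts the output.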

CI/CD you can trust

- Two-stage canaries: first ship to 5% of traffic, gated by org ID; then auto-promote once the SLOs pass (p95, error rate, token cost).

- Schema drift control: run Prisma/Migra checks; require backward-compatible migrations by default.

- Prompt ops: store prompts in Git with checksums; block merges if evals dip below baseline.

- Cost budgets: CI fails when forecasted token spend per request exceeds target.
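
The canary gate above can be sketched in two parts: a deterministic bucket per org ID, and a promotion check against SLO limits. Everything here is a hedged illustration. The hash is an FNV-1a variant over UTF-16 code units, and `inCanary`, `shouldPromote`, and the `CanaryMetrics` fields are hypothetical names, not part of any particular deploy tool.

```typescript
// 32-bit FNV-1a over UTF-16 code units. It is deterministic, so a given
// org ID always lands in the same bucket across requests and deploys.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // multiply by FNV prime, keep unsigned
  }
  return hash >>> 0;
}

/** True when the org falls inside the canary slice (percent in 0..100). */
function inCanary(orgId: string, percent: number): boolean {
  return fnv1a(orgId) % 100 < percent;
}

// Hypothetical metric shape covering the three SLO signals named in the list.
type CanaryMetrics = {
  p95Ms: number;
  errorRate: number;
  tokenCostPerRequest: number;
};

/** Promote only if every canary metric is at or under its SLO limit. */
function shouldPromote(canary: CanaryMetrics, limits: CanaryMetrics): boolean {
  return (
    canary.p95Ms <= limits.p95Ms &&
    canary.errorRate <= limits.errorRate &&
    canary.tokenCostPerRequest <= limits.tokenCostPerRequest
  );
}
```

Hashing the org ID instead of sampling per request keeps each organization on one version for the whole rollout, which avoids users in the same org seeing different behavior.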

Rollout checklist

Before each release: load-test with k6 at 2x peak QPS, validate cache hit rates, rotate API keys, and snapshot vectors. Bring product, data, and SRE into the same review to ship fast without fear.

Document learnings, tag incidents with root causes, and feed them back into prompts and runbooks every week.