Scaling AI-generated apps: performance, testing, CI/CD

An AI can assemble features fast, but scale demands discipline. Whether you use a text to app platform for internal tools, a learning platform builder AI for courses, or a GraphQL API builder AI to expose curricula and progress, treat generation as the first commit, not the final system. Here's a battle-tested playbook to keep latency low, releases safe, and teams confident.

Performance architecture first

- Define SLOs early: p95 read latency ≤ 250 ms, write ≤ 400 ms, error rate < 0.1%.

- Kill GraphQL N+1: apply DataLoader, field-level caching, and persisted queries; disable introspection in prod.

- Cache tiering: CDN for static assets, edge cache for public queries (TTL 60 s), Redis with request coalescing for hot keys.

- Bound the model: cap AI call concurrency, shard prompts by tenant, and log token spend per route to enforce budgets.

- Connection hygiene: use a connection pool (e.g., 20-50 connections per node), prefer server-side cursors for large result sets, and compress JSON responses with Brotli.

- Observe everything: OpenTelemetry traces with GraphQL resolvers as spans; add exemplars linking to logs.
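
To make the N+1 item above concrete, here is a minimal sketch of DataLoader-style batching written with plain `asyncio`. It is not the DataLoader library's API: `BatchLoader` and the `batch_fn` parameter are hypothetical names, and the sketch assumes `batch_fn` returns values in the same order as the keys it receives.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict, Hashable, List


class BatchLoader:
    """Collects load(key) calls made in the same event-loop turn into one batch."""

    def __init__(self, batch_fn: Callable[[List[Hashable]], Awaitable[List[Any]]]):
        # Assumption: batch_fn returns one value per key, in key order.
        self._batch_fn = batch_fn
        self._pending: Dict[Hashable, asyncio.Future] = {}
        self._dispatch_task = None

    def load(self, key: Hashable) -> asyncio.Future:
        loop = asyncio.get_running_loop()
        if not self._pending:
            # First key of this turn: queue a single dispatch. It runs after
            # the resolvers that are already scheduled have added their keys.
            self._dispatch_task = loop.create_task(self._dispatch())
        if key not in self._pending:
            self._pending[key] = loop.create_future()
        return self._pending[key]  # duplicate keys share one future

    async def _dispatch(self) -> None:
        batch, self._pending = self._pending, {}
        keys = list(batch)
        try:
            values = await self._batch_fn(keys)
        except Exception as exc:
            # Propagate a failed batch to every waiting resolver.
            for fut in batch.values():
                if not fut.done():
                    fut.set_exception(exc)
            return
        for key, value in zip(keys, values):
            if not batch[key].done():
                batch[key].set_result(value)
```

A resolver would call `await loader.load(course_id)` instead of querying per row. Resolvers that run in the same event-loop turn then share one `batch_fn` call, so a list of N courses costs one lookup rather than N.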

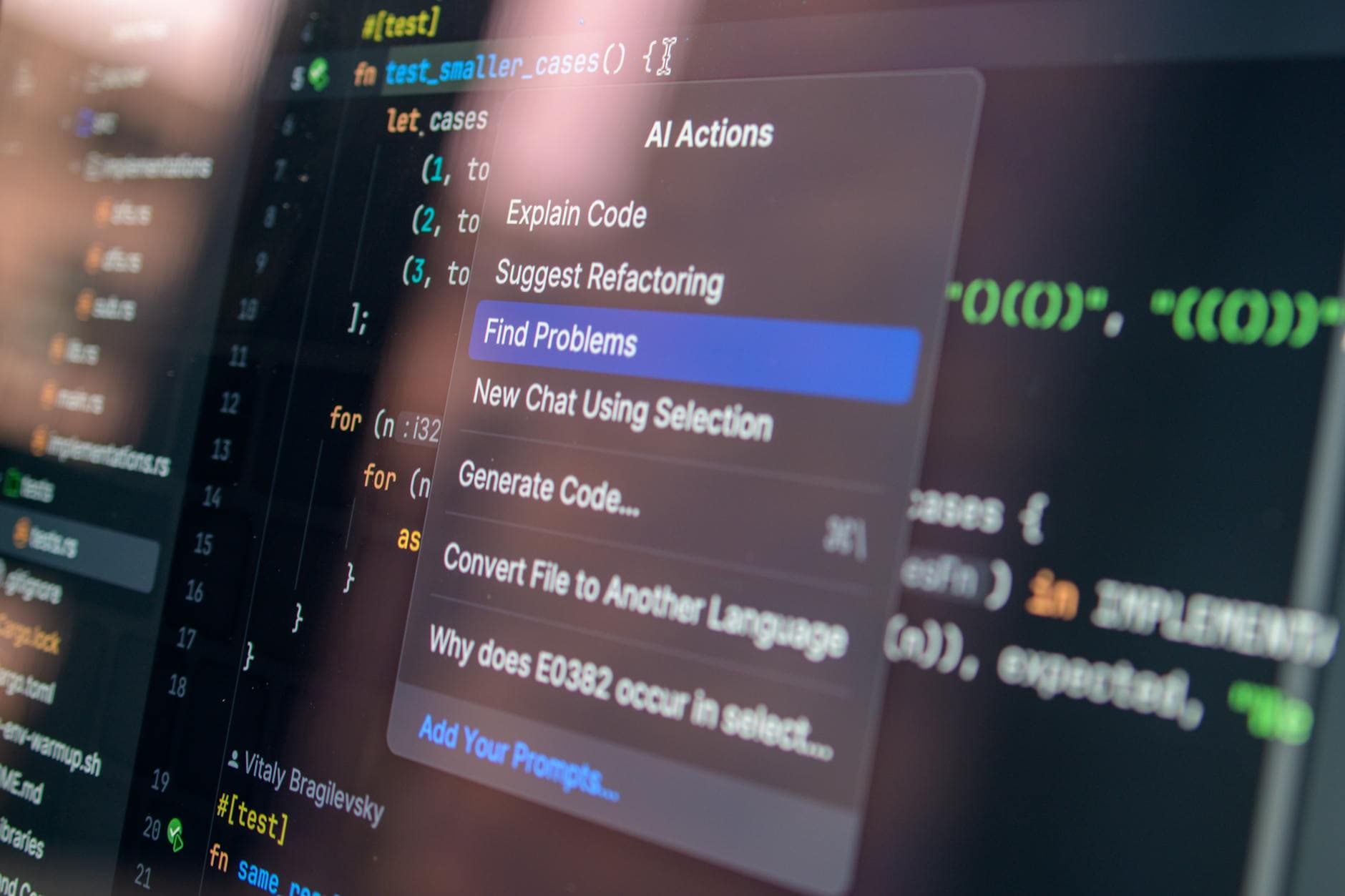
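
The "bound the model" item in the list above can be sketched as a small gate in front of every model call. This is an illustrative sketch only. `ModelGate`, `BudgetExceeded`, and the `model_fn` callback (assumed to return a `(text, tokens_used)` tuple) are hypothetical names, not part of any specific SDK.

```python
import asyncio
from collections import defaultdict
from typing import Awaitable, Callable, Dict, Tuple


class BudgetExceeded(Exception):
    """Raised when a route has used up its token budget."""


class ModelGate:
    """Caps concurrent model calls and tracks token spend per route."""

    def __init__(self, max_concurrency: int, token_budget: Dict[str, int]):
        self._slots = asyncio.Semaphore(max_concurrency)
        self._budget = token_budget            # route -> allowed tokens
        self.spent: Dict[str, int] = defaultdict(int)

    async def call(
        self,
        route: str,
        model_fn: Callable[[str], Awaitable[Tuple[str, int]]],
        prompt: str,
    ) -> str:
        # Routes without a configured budget are rejected (budget of 0).
        # The check runs before the call, so in-flight calls can overshoot.
        if self.spent[route] >= self._budget.get(route, 0):
            raise BudgetExceeded(route)
        async with self._slots:                # at most max_concurrency at once
            text, tokens = await model_fn(prompt)
        self.spent[route] += tokens
        return text
```

In a real service the `spent` counters would be exported as metrics and reset per billing period. The in-memory dictionary here only shows the shape of the control.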

Testing what the AI invented

Generated code shifts risk to integration boundaries. Build a pyramid that proves contracts, not just lines covered.

- Contract tests: snapshot GraphQL schema; fail CI on breaking diffs via graphql-inspector. Add consumer-driven tests for mobile/web clients.

- Property tests: for enrollment rules and pricing tiers, assert invariants (no duplicate seats, refunds ≤ charges) across randomized inputs.

- Golden tests: freeze AI prompts/responses for critical paths (course creation, rubric generation) with redacted PII; rebaseline intentionally.

- Load tests: simulate 5k virtual learners, 50 rps read, 10 rps write; require p95 under SLO and zero 5xx before promotion.
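
As an illustration of the property-test item above, the sketch below drives a toy enrollment model with randomized operations and checks the two invariants after every step. The `Cohort` class is a hypothetical stand-in for generated code, and the standard-library `random` module is used in place of a property-testing framework to keep the example self-contained.

```python
import random
from typing import Dict, List


class Cohort:
    """Hypothetical enrollment model, used only to demonstrate the invariants."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.seats: List[int] = []        # learner ids holding a seat
        self.paid: Dict[int, int] = {}    # learner id -> cents charged
        self.charged = 0
        self.refunded = 0

    def enroll(self, learner: int, price_cents: int) -> bool:
        if learner in self.seats or len(self.seats) >= self.capacity:
            return False
        self.seats.append(learner)
        self.paid[learner] = price_cents
        self.charged += price_cents
        return True

    def refund(self, learner: int) -> bool:
        if learner not in self.seats:
            return False
        self.seats.remove(learner)
        self.refunded += self.paid.pop(learner)
        return True


def check_invariants(cohort: Cohort) -> None:
    assert len(set(cohort.seats)) == len(cohort.seats), "duplicate seat"
    assert len(cohort.seats) <= cohort.capacity, "over capacity"
    assert 0 <= cohort.refunded <= cohort.charged, "refunds exceed charges"


def run_property(seed: int, steps: int = 200) -> Cohort:
    """Apply a random sequence of enroll/refund calls, checking after each one."""
    rng = random.Random(seed)  # seeded so any failure is reproducible
    cohort = Cohort(capacity=rng.randint(1, 8))
    for _ in range(steps):
        learner = rng.randint(1, 12)
        if rng.random() < 0.6:
            cohort.enroll(learner, rng.randint(0, 50_000))
        else:
            cohort.refund(learner)
        check_invariants(cohort)
    return cohort
```

Running `run_property(seed)` over a range of seeds in CI exercises many operation orderings. A failing seed can be replayed locally to reproduce the exact sequence.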

CI/CD that respects data and speed

- Pipeline gates: lint, typecheck, unit, then contract and migration checks (Liquibase/Prisma) with dry-run diffs.

- Build once: create a signed image + SBOM; scan with Trivy; push provenance to registry.

- Ephemeral envs: per-PR deploy with masked datasets; seed minimal tenants; auto-destroy after 24 hours.

- Canary: route 5% of traffic; run synthetic GraphQL smoke tests in 3 regions; roll back automatically if an SLO breach persists for 5 minutes.

- Feature flags: decouple deploy from release; progressively enable AI features per tenant.
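
The canary rule above can be expressed as a small decision function. The sketch below assumes metrics arrive as one-minute windows and reads the rule as "roll back after five consecutive breaching windows"; the `Window` type and function names are hypothetical. The thresholds reuse the SLOs stated earlier (p95 ≤ 250 ms, error rate < 0.1%).

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Window:
    """Metrics for one observation window (assumed to be one minute)."""
    latencies_ms: List[float]
    requests: int
    errors: int


def p95(samples: List[float]) -> float:
    """95th percentile using the nearest-rank method."""
    if not samples:
        raise ValueError("p95 needs at least one sample")
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100   # integer ceil(0.95 * n)
    return ordered[rank - 1]


def breaches_slo(w: Window, p95_slo_ms: float = 250.0,
                 max_error_rate: float = 0.001) -> bool:
    if w.requests == 0 or not w.latencies_ms:
        return False  # no data in this window: treated as "not a breach" here
    error_rate = w.errors / w.requests
    return p95(w.latencies_ms) > p95_slo_ms or error_rate >= max_error_rate


def should_roll_back(windows: Iterable[Window], sustained: int = 5) -> bool:
    """True once `sustained` consecutive windows breach the SLO."""
    streak = 0
    for w in windows:
        streak = streak + 1 if breaches_slo(w) else 0
        if streak >= sustained:
            return True
    return False
```

Requiring a sustained streak rather than a single bad window keeps one noisy minute from triggering a rollback, at the cost of reacting a few minutes later to a real regression.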

Real-world scenario

A global training company used a learning platform builder AI to generate an LMS, plus a GraphQL API builder AI for reporting. By enforcing persisted queries and Redis coalescing, p95 dropped from 480 ms to 190 ms. Contract tests caught a breaking enum change before mobile release. Canary + flags enabled a new text to app platform module to roll out to 12 regions in 48 hours without incident. Costs fell 23% under steady load.

Operational guardrails

- Error budgets: pause feature launches once more than 25% of the monthly error budget has been burned.

- Autoscaling: scale on a 60% CPU target plus queue-depth triggers; pre-warm instances before live cohorts start.
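
For the error-budget guardrail above, the arithmetic is short enough to sketch. This assumes the budget is derived from the 0.1% error-rate SLO and an expected monthly request count, and that "burn" means the share of that budget already consumed; the function names and parameters are hypothetical.

```python
def error_budget_burn(expected_monthly_requests: int, failed_requests: int,
                      slo_error_rate: float = 0.001) -> float:
    """Fraction of the monthly error budget consumed so far (1.0 = fully spent)."""
    allowed_failures = expected_monthly_requests * slo_error_rate
    if allowed_failures == 0:
        return 0.0 if failed_requests == 0 else float("inf")
    return failed_requests / allowed_failures


def launches_paused(expected_monthly_requests: int, failed_requests: int,
                    burn_threshold: float = 0.25) -> bool:
    """Apply the guardrail: pause launches once burn exceeds the threshold."""
    burn = error_budget_burn(expected_monthly_requests, failed_requests)
    return burn > burn_threshold
```

For example, with 1,000,000 expected requests the budget is 1,000 failed requests, so launches pause once more than 250 requests have failed in the month.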