The 90-Day CTO Advisory Playbook: From MVP to Production-Grade

You're holding the plan I give founders when velocity matters more than perfection. In 90 days, we move from scrappy MVP to resilient, scalable software with three anchors: AI copilot development for SaaS, React server components implementation, and Grok LLM integration services that won't implode your budget or your compliance posture.

Days 0-7: Define outcomes and guardrails

Ship a thin path, but specify what "good" means. Capture measurable goals and the risks you refuse to accept.

- Product acceptance: one hero workflow end to end, time-to-value under 5 minutes.

- NFRs: p95 latency of 300ms for web requests and 1s for AI responses; 99.9% uptime, which leaves an error budget of about 43 minutes per month.

- Data: multitenant isolation, PII catalog, retention policy, and field-level encryption plan.

- AI eval: golden datasets, offline scoring, live win-rate dashboards, and rollback criteria.

- Scope: must-have features only; everything else behind flags or in the parking lot.
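
The error budget in the NFR line falls straight out of the uptime target. Here is a minimal sketch of that arithmetic, assuming a 30-day window; `errorBudgetMinutes` and `budgetRemaining` are hypothetical helper names, not part of any library.

```typescript
// Sketch only: turn an availability SLO into an error budget.
// Assumes a 30-day month unless told otherwise.
function errorBudgetMinutes(sloPercent: number, windowDays: number = 30): number {
  if (sloPercent <= 0 || sloPercent >= 100) {
    throw new RangeError("sloPercent must be strictly between 0 and 100");
  }
  const windowMinutes = windowDays * 24 * 60;
  const allowedFailureRatio = (100 - sloPercent) / 100;
  return windowMinutes * allowedFailureRatio;
}

// Fraction of the budget still unspent; a negative value means the SLO is blown.
function budgetRemaining(
  sloPercent: number,
  downtimeMinutes: number,
  windowDays: number = 30,
): number {
  const budget = errorBudgetMinutes(sloPercent, windowDays);
  return (budget - downtimeMinutes) / budget;
}

// 99.9% over 30 days works out to roughly 43.2 minutes of allowed downtime.
const monthlyBudget = errorBudgetMinutes(99.9);
```

Wire `budgetRemaining` into your release process if you want a hard rule, for example freezing risky deploys once less than a quarter of the budget is left.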

Weeks 2-3: Architecture decisions that stick

Lock the seams early. Use React Server Components (RSC) to push data fetching to the server, stream HTML over the wire, and eliminate client-side request waterfalls. Pair them with a typed backend-for-frontend and an evented core so synchronous UX never waits on slow systems.

- Define RSC boundaries: server components own data access; client components own interactivity.

- Adopt streaming and partial hydration; precompute above-the-fold content; defer the rest.

- Create a connector layer with idempotent commands, retries, and circuit breakers.

- Observability first: traces with tenant ids, structured logs, and RED/SLO dashboards.

- RFC everything. Decisions need owners, options, tradeoffs, and a reversal plan.
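
To make "idempotent commands" in the connector layer concrete, here is a minimal sketch. It assumes an in-memory store and a synchronous handler to keep the example short; `IdempotentConnector`, `Command`, and the key format are illustrative names, and a real connector would persist keys in a shared store and run asynchronously.

```typescript
// Sketch only: deduplicate commands by a tenant-scoped idempotency key.
interface Command {
  tenantId: string;
  idempotencyKey: string;
  payload: unknown;
}

interface CommandResult {
  status: "applied" | "duplicate";
  value: unknown;
}

class IdempotentConnector {
  // Assumption: in-memory map; production code would use a durable store.
  private readonly seen = new Map<string, unknown>();
  private readonly handler: (cmd: Command) => unknown;

  constructor(handler: (cmd: Command) => unknown) {
    this.handler = handler;
  }

  execute(cmd: Command): CommandResult {
    const key = `${cmd.tenantId}:${cmd.idempotencyKey}`;
    if (this.seen.has(key)) {
      // A retry of a command we already applied: return the stored result.
      return { status: "duplicate", value: this.seen.get(key) };
    }
    const value = this.handler(cmd);
    this.seen.set(key, value);
    return { status: "applied", value };
  }
}

// Usage: the side effect runs once even if the caller retries.
let invoicesCreated = 0;
const connector = new IdempotentConnector(() => ++invoicesCreated);
const createInvoice: Command = { tenantId: "t1", idempotencyKey: "inv-42", payload: {} };
const firstAttempt = connector.execute(createInvoice);
const retryAttempt = connector.execute(createInvoice);
```

Scoping the key by tenant is a deliberate choice here: two tenants can reuse the same client-generated key without colliding.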

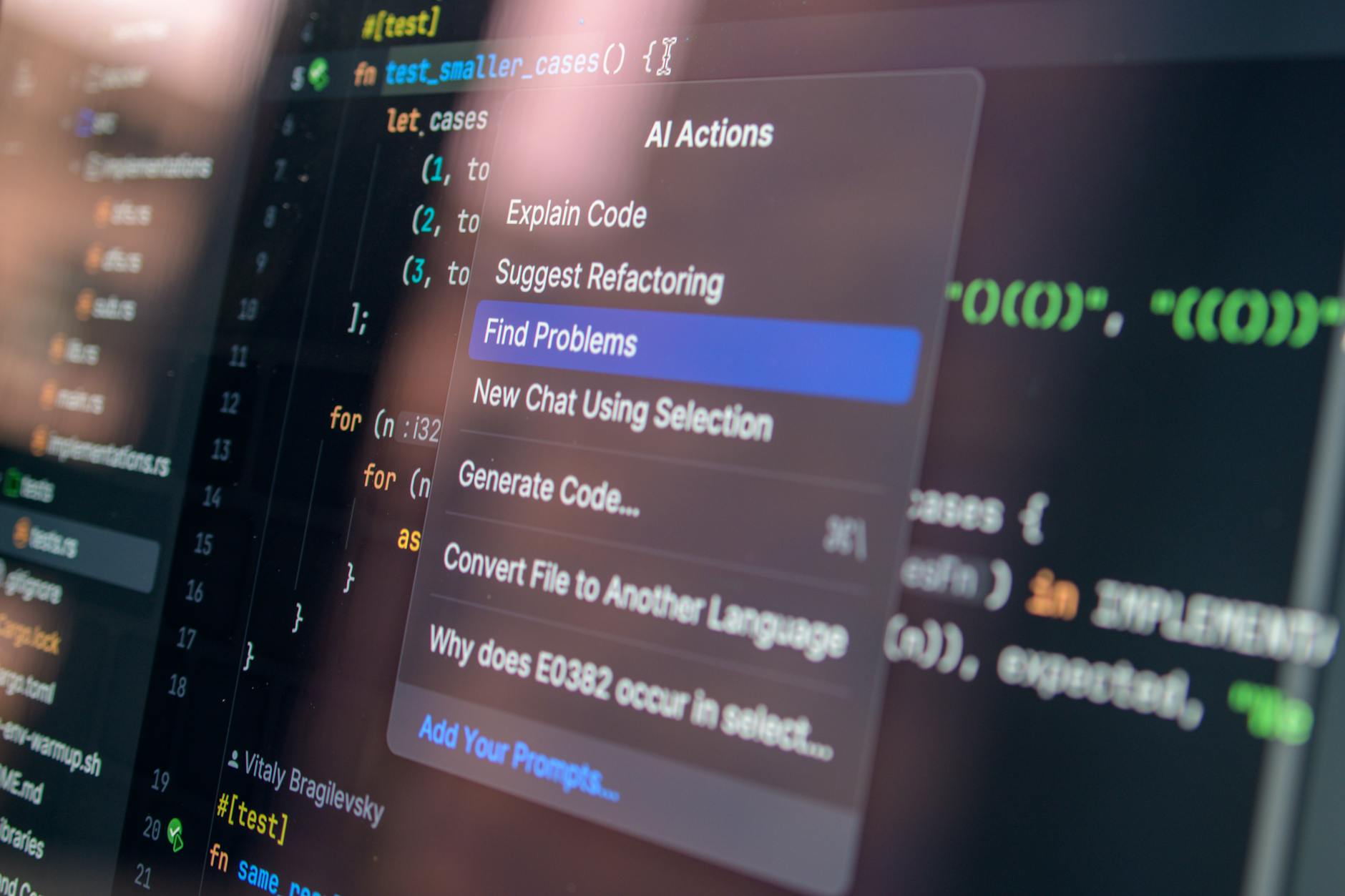

Weeks 4-5: Build the AI copilot slice

Design a single, monetizable assistant workflow. Treat your SaaS copilot as a product, not a feature. Start with a retrieval-augmented generation (RAG) pattern, then wire in Grok for reasoning and tool use, with deterministic fallbacks when confidence drops.

- Context: tenant-scoped vector stores, plus rules from billing, roles, and feature flags.

- Tooling: define 5-7 typed actions; every tool is idempotent, observable, and rate-limited.

- Safety: prompt templates versioned in Git, PII scrubbing, and deny-list tests per release.

- Quality: offline eval packs, live shadow traffic, and human-in-the-loop triage queues.

- Economics: token budgets by plan; cache embeddings; reuse summaries; batch long jobs.
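
Two ideas from this slice, token budgets by plan and deterministic fallbacks when confidence drops, can be sketched together. Everything named here is an assumption for illustration: the plan names, the budget numbers, the 0.7 confidence threshold, and the synchronous `callModel` stand-in (a real LLM client would be async and would not look exactly like this).

```typescript
// Sketch only: gate each copilot call on plan budget and model confidence.
type Plan = "free" | "pro" | "enterprise";

// Hypothetical monthly token budgets; replace with your own pricing.
const TOKEN_BUDGETS: Record<Plan, number> = {
  free: 50000,
  pro: 1000000,
  enterprise: 10000000,
};

interface ModelAnswer {
  text: string;
  confidence: number; // 0..1, however your eval stack defines it
  tokensUsed: number;
}

interface CopilotDeps {
  callModel: (prompt: string, maxTokens: number) => ModelAnswer; // stand-in client
  fallback: (prompt: string) => string; // deterministic path, e.g. a template
}

interface CopilotReply {
  text: string;
  source: "model" | "fallback";
  tokensSpent: number; // tenant's running total after this call
}

function answer(
  prompt: string,
  plan: Plan,
  tokensSpent: number,
  deps: CopilotDeps,
  minConfidence: number = 0.7,
): CopilotReply {
  const remaining = TOKEN_BUDGETS[plan] - tokensSpent;
  // Budget exhausted: skip the model entirely.
  if (remaining <= 0) {
    return { text: deps.fallback(prompt), source: "fallback", tokensSpent };
  }
  const result = deps.callModel(prompt, Math.min(remaining, 1024));
  const total = tokensSpent + result.tokensUsed;
  // Tokens are still charged when a low-confidence answer is discarded.
  if (result.confidence < minConfidence) {
    return { text: deps.fallback(prompt), source: "fallback", tokensSpent: total };
  }
  return { text: result.text, source: "model", tokensSpent: total };
}
```

Returning `source` with every reply makes the fallback rate easy to chart, which is the number you will want when tuning the threshold.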

Weeks 6-7: Pipelines, CI/CD, and governance

Automate the boring and the dangerous. Every change must be reproducible, reviewable, and measurable, including prompts and datasets.

- CI: unit, contract, and synthetic journey tests; eval gates for AI deltas; canary every deploy.

- CD: blue/green with traffic mirroring; instant rollback; feature-flag migrations.

- Infra as code: environments ephemeral by default; seeded fixtures; hermetic builds.

- Security: SSO/SAML, SCIM, secrets rotation, DLP on prompts and completions, tenant keys.

- Data: write-ahead audit logs, GDPR hooks, lineage in your ETL, and access reviews monthly.
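
An eval gate for AI deltas can be as small as a function CI calls after scoring the golden set. The sketch below is one way to do it, assuming each golden case already has a numeric score for the baseline and the candidate; `evalGate` and its thresholds are illustrative defaults, not a standard.

```typescript
// Sketch only: block a prompt or model change that regresses on the golden set.
interface EvalCase {
  id: string;
  baselineScore: number;  // score of the version currently in production
  candidateScore: number; // score of the change under review
}

interface GateResult {
  pass: boolean;
  winRate: number;       // share of cases where the candidate ties or wins
  regressions: string[]; // case ids that dropped by more than maxDrop
}

function evalGate(
  cases: EvalCase[],
  minWinRate: number = 0.5,
  maxDrop: number = 0.1,
): GateResult {
  if (cases.length === 0) {
    throw new Error("golden set is empty; refusing to pass the gate");
  }
  let winsOrTies = 0;
  const regressions: string[] = [];
  for (const c of cases) {
    if (c.candidateScore >= c.baselineScore) winsOrTies++;
    if (c.baselineScore - c.candidateScore > maxDrop) regressions.push(c.id);
  }
  const winRate = winsOrTies / cases.length;
  return {
    pass: winRate >= minWinRate && regressions.length === 0,
    winRate,
    regressions,
  };
}
```

In CI, fail the job when `pass` is false and print `regressions` so the reviewer sees exactly which golden cases got worse.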

Weeks 8-9: Reliability, scale, and cost control

Prove the SLOs under production-like load. Build margins into latency, memory, and spend. Understand where every millisecond and dollar goes.

- Performance: stream the first byte in under 200ms; cache read-heavy queries; batch N+1 queries at the source.

- Queues: backpressure and timeouts; isolate slow tools from request threads.

- LLM cost: route simple intents to cheaper models; cap max tokens; preflight with heuristics.

- Resilience: retry with jitter; circuit break third parties; run chaos drills weekly.

- Dashboards: per-tenant costs, prompt win rates, and user time-to-value trends.
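
The resilience bullet names two patterns worth seeing side by side: retry with jitter and circuit breaking. This is a minimal sketch under simplifying assumptions: synchronous calls, an injectable clock and random source for testability, and illustrative defaults for thresholds and delays. `backoffDelayMs` and `CircuitBreaker` are hypothetical names.

```typescript
// Sketch only: full-jitter exponential backoff.
// Delay is uniform in [0, min(capMs, baseMs * 2^attempt)).
function backoffDelayMs(
  attempt: number,
  baseMs: number = 100,
  capMs: number = 5000,
  random: () => number = Math.random,
): number {
  const ceiling = Math.min(capMs, baseMs * Math.pow(2, attempt));
  return Math.floor(random() * ceiling);
}

// Sketch only: open after `threshold` consecutive failures, then allow
// one trial call once `cooldownMs` has elapsed (the half-open state).
class CircuitBreaker {
  private failures = 0;
  private openedAt: number | null = null;
  private readonly threshold: number;
  private readonly cooldownMs: number;
  private readonly now: () => number;

  constructor(
    threshold: number = 3,
    cooldownMs: number = 10000,
    now: () => number = () => Date.now(),
  ) {
    this.threshold = threshold;
    this.cooldownMs = cooldownMs;
    this.now = now;
  }

  call<T>(fn: () => T, fallback: () => T): T {
    const open = this.openedAt !== null;
    if (open && this.now() - (this.openedAt as number) < this.cooldownMs) {
      return fallback(); // still cooling down: do not touch the third party
    }
    try {
      const result = fn();
      this.failures = 0;
      this.openedAt = null; // success closes the circuit
      return result;
    } catch {
      this.failures++;
      if (this.failures >= this.threshold) this.openedAt = this.now();
      return fallback();
    }
  }
}
```

The jitter matters as much as the exponent: without it, every client that failed together retries together.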

Weeks 10-12: Enterprise readiness and launch

Polish the edges customers feel: security, billing, support, and trust. The goal is confidence, not just availability.

- Security reviews: penetration test, dependency audit, and threat model for AI misuse.

- Enterprise: role-based access, delegated admin, export APIs, and guaranteed SLAs.

- Commerce: metered billing for AI calls; overage alerts; annual invoicing flows.

- Support: in-product diagnostics, session replays, playbooks, and on-call rotations.

- Marketing/SEO: schema markup, docs search, shareable AI outputs with canonical tags.
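
Metered billing with overage alerts reduces to a small calculation per billing period. Here is a minimal sketch, assuming prices in integer cents and a single alert threshold; `MeterPlan`, `summarizeUsage`, and the numbers in the usage example are all hypothetical.

```typescript
// Sketch only: compute overage and alert state for AI calls in one period.
interface MeterPlan {
  includedCalls: number;        // calls covered by the subscription
  overagePriceCents: number;    // price per call beyond the allowance
  alertAtRatio: number;         // e.g. 0.8 warns at 80% of the allowance
}

interface UsageSummary {
  overageCalls: number;
  overageChargeCents: number;
  alert: "none" | "approaching" | "over";
}

function summarizeUsage(callsUsed: number, plan: MeterPlan): UsageSummary {
  if (callsUsed < 0) throw new RangeError("callsUsed cannot be negative");
  const overageCalls = Math.max(0, callsUsed - plan.includedCalls);
  let alert: UsageSummary["alert"] = "none";
  if (overageCalls > 0) {
    alert = "over";
  } else if (callsUsed >= plan.includedCalls * plan.alertAtRatio) {
    alert = "approaching";
  }
  return {
    overageCalls,
    overageChargeCents: overageCalls * plan.overagePriceCents,
    alert,
  };
}

// Usage with made-up plan numbers.
const proPlan: MeterPlan = { includedCalls: 1000, overagePriceCents: 2, alertAtRatio: 0.8 };
const midMonth = summarizeUsage(850, proPlan);
const endOfMonth = summarizeUsage(1200, proPlan);
```

Keeping money in integer cents avoids floating-point drift on invoices; convert to a display currency only at the edge.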

Case snapshot: 90 days, condensed

A B2B analytics startup saw a 42% lift in activation after launching a copilot that generated first dashboards from CSVs. React Server Components reduced shipped JavaScript by 38% and cut p95 latency to 280ms. Grok handled schema inference and tool use; deterministic SQL templates served as the fallback for 14% of queries.

Tooling you'll thank yourself for

- Design: event storming boards; sequence diagrams for RSC boundaries and tool calls.

- Data: DuckDB for ad hoc tests; LakeFS for reproducible corpora; vector DB with HNSW.

- Quality: prompt unit tests; evaluation harness; red-teaming scripts in CI.

- Experience: analytics with funnel stitching; feature flags with remote config; PX surveys.

- Run: Terraform, Temporal, OpenTelemetry, and a budget dashboard pinned to Slack.
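
Prompt unit tests need no special framework to get started. The sketch below shows one possible shape: a strict template renderer, a PII scrubber, and a deny-list check you can assert on in CI. The `{{name}}` placeholder syntax and the two patterns (SSN-like numbers and email addresses) are assumptions for illustration and nowhere near a complete PII catalog.

```typescript
// Sketch only: render versioned prompt templates and test them like code.
// Assumption: templates use {{name}} placeholders.
function renderPrompt(template: string, vars: Record<string, string>): string {
  return template.replace(/\{\{(\w+)\}\}/g, (_match: string, name: string) => {
    const value = vars[name];
    if (value === undefined) throw new Error(`missing variable: ${name}`);
    return value;
  });
}

// Illustrative deny-list: SSN-like numbers and email addresses only.
const DENY_PATTERNS: RegExp[] = [
  /\b\d{3}-\d{2}-\d{4}\b/g,
  /[\w.+-]+@[\w-]+\.[\w.]+/g,
];

function scrubPii(text: string): string {
  return DENY_PATTERNS.reduce(
    (acc, pattern) => acc.replace(pattern, "[REDACTED]"),
    text,
  );
}

function containsDenied(text: string): boolean {
  // String.prototype.search ignores lastIndex, so global flags are safe here.
  return DENY_PATTERNS.some((pattern) => text.search(pattern) !== -1);
}

// Usage: scrub user input before it reaches the template.
const SUMMARY_TEMPLATE = "Summarize this ticket for {{role}}: {{ticket}}";
const rendered = renderPrompt(SUMMARY_TEMPLATE, {
  role: "support lead",
  ticket: scrubPii("Customer ada@example.com reports a failed export."),
});
```

A per-release test then asserts that `containsDenied(rendered)` is false for every fixture, which turns the deny-list into a regression suite.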

Where to find elite builders fast

If you need a strike team that's done this before, slashdev.io can parachute in senior engineers and product leaders. They blend remote velocity with agency discipline so you get battle-tested patterns, clean handoffs, and real business outcomes, not slideware.

Pitfalls to dodge

- Over-building the client: let the server stream; minimize state and hydration.

- Ignoring evals: without golden datasets and win-rate targets, AI features feel like parlor tricks.

- One model to rule them all: route by task; renegotiate prices.

- Skipping ops: no SLOs, no business. Instrument first, then optimize.
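
Routing by task, the antidote to the one-model pitfall, can start as a cheap heuristic that runs before any model call. This is a minimal sketch: the tier names, the 30-word cutoff, and the token caps are placeholder assumptions, and in practice you would tune them against your own eval data.

```typescript
// Sketch only: preflight heuristic that picks a model tier and a token cap.
type Tier = "small" | "large";

interface Route {
  tier: Tier;
  maxTokens: number;
}

function routeRequest(prompt: string, needsTools: boolean): Route {
  const wordCount = prompt.trim().split(/\s+/).filter(Boolean).length;
  // Short prompts with no tool use go to the cheaper tier.
  const looksSimple = wordCount <= 30 && !needsTools;
  return looksSimple
    ? { tier: "small", maxTokens: 256 }
    : { tier: "large", maxTokens: 1024 };
}

// Usage: a short FAQ-style question versus a request that needs tool calls.
const faqRoute = routeRequest("How do I reset my password?", false);
const toolRoute = routeRequest("Build a revenue dashboard from this CSV", true);
```

Log the chosen tier next to the eval outcome for each request; that pairing shows whether the cheap route is actually good enough.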